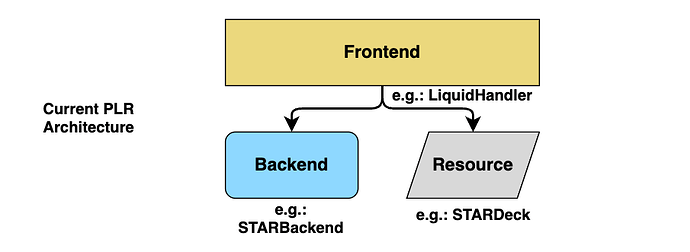

In PLR, front-end classes have always been the main interface to a machine: the reference to a Hamilton STAR is a LiquidHandler, a Cytation is a PlateReader, a cpac is a TemperatureController, etc. When you control a STAR or an OT, the reference is always an instance of LiquidHandler.

As we implement more in PLR, two problems are becoming obvious:

Problem 1: some machines do not clearly fit into just one of these categories; they act like several machines in one.

One example is that many liquid handlers have arms. I am working on adding a SCARA(Arm) class for the kx2 and pf400. I want to reuse the code I wrote in LiquidHandler.{pick_up,drop}_resource for these arms exactly as I use it for the iswap (the integrated arm on STARs).

A second example of this first problem is the huge number of devices that do temperature control. We want to share the TemperatureController.wait_for_temperature method across all of them: actual heating plates like the cpac, heater shakers, incubators, certain plate readers, etc.
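To make the sharing goal concrete, here is a minimal sketch of one wait_for_temperature implementation serving any device whose backend can report a temperature. It is a simplified, synchronous stand-in and not PLR's actual implementation: TemperatureBackend, FakeRampingBackend, and the parameter names are assumptions made for illustration.

```python
import time
from typing import Protocol


class TemperatureBackend(Protocol):
    """Hypothetical minimal backend interface (an assumption, not PLR's class)."""

    def get_temperature(self) -> float: ...


class TemperatureController:
    """Simplified, synchronous stand-in for a temperature front end."""

    def __init__(self, backend: TemperatureBackend) -> None:
        self.backend = backend

    def wait_for_temperature(
        self,
        target: float,
        tolerance: float = 0.5,
        timeout: float = 5.0,
        poll_interval: float = 0.0,
    ) -> float:
        """Poll the backend until it reads within `tolerance` of `target`."""
        deadline = time.monotonic() + timeout
        while time.monotonic() < deadline:
            current = self.backend.get_temperature()
            if abs(current - target) <= tolerance:
                return current
            time.sleep(poll_interval)
        raise TimeoutError(f"did not reach {target} within {timeout} s")


class FakeRampingBackend:
    """Test double: warms by `step` degrees every time it is read."""

    def __init__(self, start: float, step: float) -> None:
        self.temperature = start
        self.step = step

    def get_temperature(self) -> float:
        self.temperature += self.step
        return self.temperature


# The same front end code is reused for two different kinds of device.
heating_plate = TemperatureController(FakeRampingBackend(start=20.0, step=5.0))
incubator = TemperatureController(FakeRampingBackend(start=30.0, step=1.0))
reached_plate = heating_plate.wait_for_temperature(37.0, tolerance=3.0)
reached_incubator = incubator.wait_for_temperature(37.0, tolerance=0.5)
```

The front end only depends on the backend being able to report a temperature, which is why the same method can serve a heating plate, an incubator, or a plate reader.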

We could have LiquidHandler inherit from Arm and just add the liquid handling functions, and have incubators inherit from TemperatureController. However, liquid handlers do not in general have dedicated arms (the OT2, Prep, Nimbus, etc. have none), so this is ugly. LiquidHandler and Arm should really exist on the same level and not depend on each other.

Problem 2: machine front ends are Resources, but not every machine uses the same resource model, even if it is otherwise a very similar machine. The most obvious example is incubators: the SCILA has 4 drawers and positions, while Cytomats have tens of internal plate locations but only one transfer station.

(On the topic of “incubators”, they are actually just “plate movers” and oftentimes “temperature controllers”.)

→ it is becoming clear to me that the “simplest possible implementation” of having a LiquidHandler being the frontmost interface (“front end”) for, for example, a STAR is not working anymore. It is simple, but not “possible”. It is too opinionated about certain machines. We need to have a dedicated STAR front end that somehow combines LiquidHandler and Arm. While one unified class per machine was the ideal, we learn through implementations what the standard needs to be.

Before explaining the two proposals, I want to give an example of how PLR being “universal” is currently used. We have many functions that take machines as arguments, like async def serial_dilution(lh: LiquidHandler, ...) and, for byonoy reading:

    def read_plate_byonoy(b: Byonoy, arm: Arm, plate: Plate, ...):
        # byonoy requires plate sandwiched in between illumination and reader unit
        arm.move(illumination_unit, parking_unit)
        arm.move(plate, reader_unit)
        arm.move(illumination_unit, reader_unit)
        b.measure()  # measure absorbance

These are functions (not methods) so they can be shared across protocols and workcells (workcells are often classes).

Here are the two options for sharing machine front ends across actual machine interfaces:

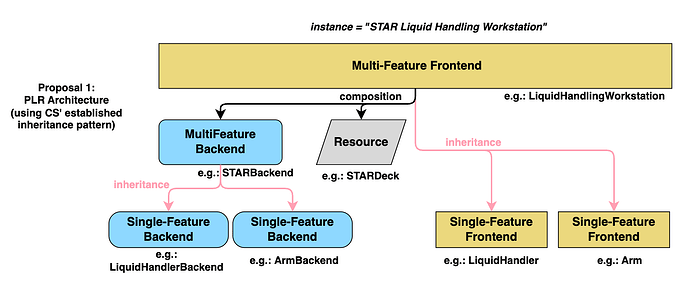

Option 1: Machine resources inheriting from multiple “front ends”

This was developed together with @CamilloMoschner

In PLR, we have “composite front ends” like HeaterShaker(TemperatureController, Shaker). We could follow that pattern and have STAR(LiquidHandler, Arm).

Pseudocode example:

    class STAR(Resource, LiquidHandler, Arm):
        def __init__(self, ...):
            self.backend = STARBackend(...)
            Arm.__init__(self, backend=self.backend, ...)
            LiquidHandler.__init__(self, backend=self.backend, ...)
            ...

    # simple operations
    star = STAR(...)
    star.aspirate(...)
    star.move_plate(...)

    # backend specific methods
    star.backend.special_method()

    # functions
    read_plate_byonoy(arm=star, ...)  # STAR inherits from Arm

    class SCILA(
        Resource,
        TemperatureController,
        CO2Controller,
        AutomatedStorage
    ):
        ...

    class Cytomat(
        Resource,
        TemperatureController,
        CO2Controller,
        AutomatedStorage
    ):
        ...

Where I see this running into problems is when a machine has multiple “machines” with one backend. For example, a Tecan EVO can have two independent gripper arms. The proposal for this is to use backend_kwargs:

    # Evo inherits from Arm but has two arms
    # use_arm is a backend_kwarg for EVOBackend.move_plate
    read_plate_byonoy(evo, ..., use_arm=1)

This is extremely awkward since we don’t even know where to send the backend_kwarg in read_plate_byonoy. You’d have to have read_plate_byonoy(..., arm_kwargs: dict, reader_kwargs: dict).
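A rough sketch of what that workaround would look like in practice. RecordingArm and RecordingReader are hypothetical stand-ins that only record the calls they receive, and resources are plain strings here instead of PLR Resource objects:

```python
from typing import Any, Dict, List, Optional, Tuple


class RecordingArm:
    """Stand-in for an arm front end; it only records the calls it receives."""

    def __init__(self) -> None:
        self.calls: List[Tuple[str, str, Dict[str, Any]]] = []

    def move(self, resource: str, destination: str, **backend_kwargs: Any) -> None:
        self.calls.append((resource, destination, backend_kwargs))


class RecordingReader:
    """Stand-in for the byonoy front end."""

    def __init__(self) -> None:
        self.calls: List[Dict[str, Any]] = []

    def measure(self, **backend_kwargs: Any) -> None:
        self.calls.append(backend_kwargs)


def read_plate_byonoy(
    reader: RecordingReader,
    arm: RecordingArm,
    plate: str,
    arm_kwargs: Optional[Dict[str, Any]] = None,
    reader_kwargs: Optional[Dict[str, Any]] = None,
) -> None:
    # Each device needs its own dict, because the function cannot know
    # which device a bare keyword such as use_arm=1 is meant for.
    arm_kwargs = arm_kwargs or {}
    reader_kwargs = reader_kwargs or {}
    arm.move("illumination_unit", "parking_unit", **arm_kwargs)
    arm.move(plate, "reader_unit", **arm_kwargs)
    arm.move("illumination_unit", "reader_unit", **arm_kwargs)
    reader.measure(**reader_kwargs)


evo = RecordingArm()  # plays the role of an EVO that "is an" Arm
byonoy = RecordingReader()
read_plate_byonoy(byonoy, evo, "plate", arm_kwargs={"use_arm": 1})
```

Every shared function that touches more than one device would need one such dict per device, and every call inside it has to remember to forward the right one.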

You could argue that other methods in the function will also need backend_kwargs, so we are already forced into this pattern. I don’t think that is necessarily the case if functions are properly written and our abstractions for the actual machines are good. The byonoy reading abstraction using a robotic arm is a good counterexample to backend kwargs being needed. It should be easy to express these easy things with PLR.

Additionally, it makes the following more difficult: some machines have optional features. For example, some cytomats are just plate storage; they use the same API for that but lack environmental control. Would we have CytomatWithEnvironment(..., TemperatureController) and CytomatWithoutEnvironment(...)?

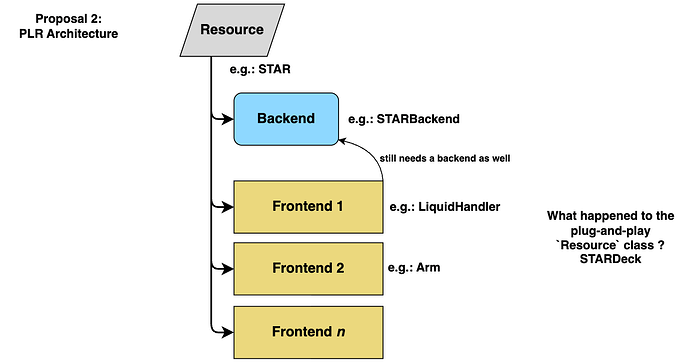

Option 2: Machine resources having “front ends” as attributes

This one is a more radical change.

Pseudocode example:

    class STAR(Resource):
        def __init__(self):
            super().__init__(name="STAR", size_x=0, size_y=0, size_z=0)
            self.backend = STARBackend()
            self.lh = LiquidHandler(backend=self.backend)
            self.arm = Arm(backend=self.backend)

    # simple operations
    star = STAR()
    star.setup()
    star.lh.aspirate(...)
    star.arm.move_plate(...)
    assert star.lh._backend is star.backend  # True

    # backend specific methods
    star.backend.special_method()

    # functions
    read_plate_byonoy(arm=star.arm, ...)

This is nice when machines have multiple instances of a machine front end:

    read_plate_byonoy(arm=evo.arms[1], ...)
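As a sketch of how several Arm front ends could share one backend under this option: EVOBackend.move_resource, the index argument, and sandwich_plate (just the arm steps of the byonoy read) are all hypothetical names chosen for illustration, and resources are plain strings.

```python
from typing import List, Tuple


class EVOBackend:
    """Hypothetical backend that logs (arm index, resource, destination)."""

    def __init__(self) -> None:
        self.log: List[Tuple[int, str, str]] = []

    def move_resource(self, arm_index: int, resource: str, destination: str) -> None:
        self.log.append((arm_index, resource, destination))


class Arm:
    """Front end bound to one physical arm of a shared backend."""

    def __init__(self, backend: EVOBackend, index: int) -> None:
        self.backend = backend
        self.index = index

    def move(self, resource: str, destination: str) -> None:
        # The arm selection lives in the front end, not in backend kwargs.
        self.backend.move_resource(self.index, resource, destination)


class EVO:
    def __init__(self, num_arms: int = 2) -> None:
        self.backend = EVOBackend()
        self.arms = [Arm(self.backend, index=i) for i in range(num_arms)]


def sandwich_plate(arm: Arm, plate: str) -> None:
    """Arm steps of the byonoy read; no backend kwargs are needed."""
    arm.move("illumination_unit", "parking_unit")
    arm.move(plate, "reader_unit")
    arm.move("illumination_unit", "reader_unit")


evo = EVO()
sandwich_plate(evo.arms[1], "plate")
```

The shared function never learns that the machine has two arms; the caller picks one by passing evo.arms[1].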

For machines with optional controls such as cytomats, this is nice:

    class Cytomat(Resource):
        def __init__(self, with_environment: bool = True, ...):
            super().__init__(name="Cytomat", size_x=0, size_y=0, size_z=0)
            self.backend = CytomatBackend()
            self.automated_storage = AutomatedStorage(backend=self.backend)
            if with_environment:
                self.temperature_controller = TemperatureController(backend=self.backend)
            else:
                self.temperature_controller = None
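One consequence is that shared code has to check whether the optional front end is present. A minimal sketch of such a check, where set_temperature_if_supported and the setpoints list are hypothetical and the classes are trimmed-down stand-ins for the ones above:

```python
from typing import List, Optional


class CytomatBackend:
    """Hypothetical backend that records requested setpoints."""

    def __init__(self) -> None:
        self.setpoints: List[float] = []


class TemperatureController:
    def __init__(self, backend: CytomatBackend) -> None:
        self.backend = backend

    def set_temperature(self, temperature: float) -> None:
        self.backend.setpoints.append(temperature)


class Cytomat:
    def __init__(self, with_environment: bool = True) -> None:
        self.backend = CytomatBackend()
        self.temperature_controller: Optional[TemperatureController] = (
            TemperatureController(self.backend) if with_environment else None
        )


def set_temperature_if_supported(machine: object, temperature: float) -> bool:
    """Return True if the machine exposed a temperature front end and it was used."""
    controller = getattr(machine, "temperature_controller", None)
    if controller is None:
        return False
    controller.set_temperature(temperature)
    return True


incubator = Cytomat(with_environment=True)
storage_only = Cytomat(with_environment=False)
heated = set_temperature_if_supported(incubator, 37.0)
skipped = set_temperature_if_supported(storage_only, 37.0)
```

Whether a missing front end should be skipped silently, as here, or raise an error is a design choice this sketch does not settle.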

The goal with being “universal” in PLR is to make it easy to reuse code across machines: plate definitions, utility functions, potentially even entire protocols. However, a true “universal” / identical code architecture is not feasible given how different these machines turn out to be. Again, what we should build are tools that are amenable to being shared across all machines.

This would be quite a radical change with either option. I think this is the right time to get these in, before we do a versioned beta.

Curious to hear what you think.